School of Education

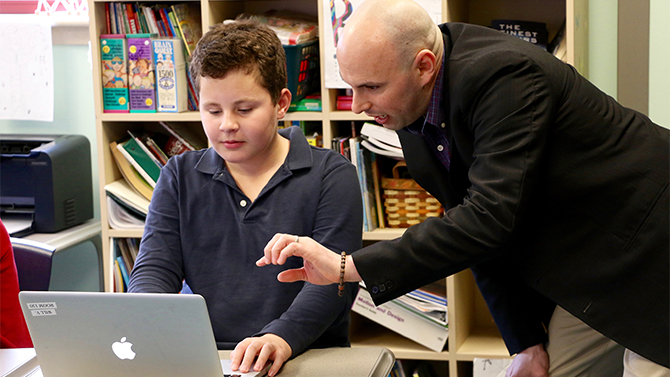

Students, teachers benefit from computerized essay assessment system

“Pick one place you’d like to go in the world and explain why.” This is a typical assignment a fifth-grade teacher might give a class.

Once the papers are turned in, the teacher must review 20 to 30 essays for clarity, grammar, sentence structure, vocabulary use, organization, idea development, elaboration and accuracy. Not surprisingly, students often wait several days for feedback.

Now imagine a class where students type their essay into a software program that immediately flags lower-level writing errors like spelling, punctuation and formatting, and provides suggestions for improving organization, sentence structure and word choice.

Students are able to instantly correct errors, make revisions and resubmit their essays. Teachers can then focus their efforts on helping students to improve their critical thinking and higher-level writing skills.

The PEG Writing system, developed by Measurement Incorporated (MI), implements automated essay scoring (AES) through a number of formative assessment software products. This automated essay evaluation (AEE) software system is being used by nearly three-quarters of a million students in the United States and several other countries.

While other researchers have investigated the reliability of scoring models, Joshua Wilson, assistant professor in the University of Delaware’s School of Education, is taking a different approach. His research evaluates how effective AEE is for teaching and learning.

He wants to determine whether it helps students, particularly those at risk of failing to meet grade-level literacy standards, improve their writing skills.

Writing instruction is gaining importance

Under the federal No Child Left Behind policy, writing instruction took a backseat to reading and math. With the introduction of the Common Core standards and associated literacy assessments (e.g., Smarter Balanced), writing has once again been brought to the forefront.

“The majority of U.S. students lack sufficient writing skills,” said Wilson. “Roughly two-thirds of students in grades four, eight, and 12 fail to achieve grade-level writing proficiency, as measured by the National Assessment of Educational Progress (NAEP).”

Why does this matter? “Students with weak writing skills are at a greater risk of dropping out of school or being referred to special education programs — potentially failing to secure stable and gainful employment,” said Wilson.

Yet teachers face many barriers with respect to teaching writing.

“A major impediment is the time it takes to evaluate student writing,” said Wilson. “Subsequently, many students do not get adequate practice or feedback to improve as writers.”

PEG uses natural language processing to produce essay ratings that, Wilson has found, are highly predictive of those assigned by human raters. Because this scoring is automated yet reliable, students receive more thorough feedback without adding to the teacher’s grading load.

Stacy Poplos Connor, a master teacher at The College School, started working with PEG after reading about it in UDaily. She has found it to be an incredible resource for teaching and learning.

“The students receive immediate feedback on each of the main traits of writing,” she said. “If they are struggling with a particular genre and/or editing tool, they can watch videos embedded into the site or send me messages while they write.”

Findings of the research

For the past three years, Wilson has partnered with the Red Clay Consolidated School District to pilot and research PEG Writing in grades three through five, as well as in select middle school classrooms in the Red Clay and Colonial school districts. Collaborating with a number of UD graduate and undergraduate students, Wilson has published three studies on PEG’s impact on both learning and instruction.

In the classroom, he has found:

- Students’ writing quality improves in response to PEG’s automated feedback, and the feedback appears to be equally effective for students with differing levels of reading and writing skill.

- Students with disabilities are able to use automated feedback to produce writing of equal quality to their non-disabled peers, despite writing significantly weaker first drafts.

- PEG can identify struggling writers early in the school year so they may be referred for intervention to remediate any skill deficits.

Wilson also compared classrooms in which teachers used Google Docs with classrooms using PEG Writing to evaluate PEG’s impact on writing instruction. The analysis revealed that:

- Students in the PEG Writing group showed an increase in writing motivation. Writing motivation did not change for students in the Google Docs group.

- Teachers using Google Docs gave proportionately more feedback on lower-level writing skills, such as capitalization, punctuation and formatting.

- Teachers using PEG Writing still commented on lower-level writing skills, but they were able to give more feedback on higher-level writing skills, including idea development.

“PEG allows me to pick a topic based on several genres while also incorporating my own teacher-created prompts that fit with my instruction,” said Connor. “It has definitely saved me time in grading and editing. I would highly recommend PEG to any teacher.”

Future research

Wilson is now piloting and researching PEG at George Read Middle School, Gunning Bedford Middle School and Castle Hills Elementary School in the Colonial School District. He is expanding his analysis to determine whether the additional practice and feedback provided through PEG Writing can help English language learners (ELLs) in elementary and middle school pass the ACCESS for ELLs test.

Article by Harpreet Kaur

Photos by Liz Adams